- Home

- About Us

- Work

- Journal

- Contact

- Caffeinated root beer

- Winamp skins

- Fnaf security breach ps4 gameplay

- Dual application wizard

- Powerpoint 2013 where are picture shapes

- Home designer pro 7-0 download

- Hand mirror for professionals

- Power suite train car fallout 4

- Does simcity 4 deluxe edition have rush hour in it

- Universal database for dental eligibility

Multimodal interfaces have been commercialized extensively for field and mobile applications during the last decade. From a system development viewpoint, this book outlines major approaches for multimodal signal processing, fusion, architectures, and techniques for robustly interpreting users' meaning. It describes the data-intensive methodologies used to envision, prototype, and evaluate new multimodal interfaces. This book explains the foundation of human-centered multimodal interaction and interface design, based on the cognitive and neurosciences, as well as the major benefits of multimodal interfaces for human cognition and performance. They have shifted the fulcrum of human-computer interaction much closer to the human. Multimodal interfaces support mobility and expand the expressive power of human input to computers. Finally, we present an expert user case study that examines the WOZ Recognizer’s usability.ĭuring the last decade, cell phones with multimodal interfaces based on combined new media have become the dominant computer interface worldwide.

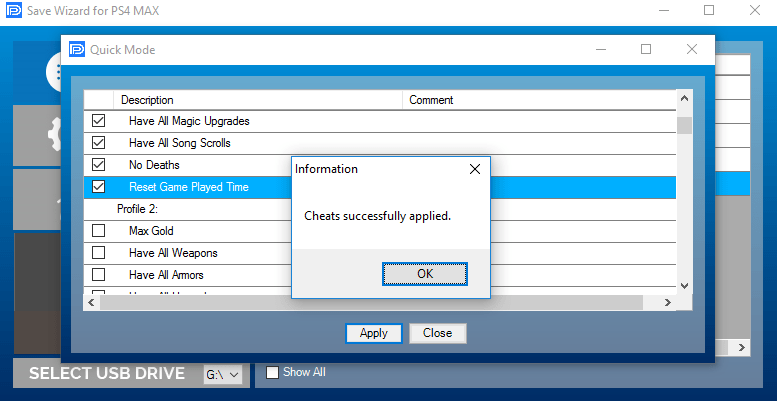

Dual application wizard how to#

In addition, we discuss how sketches are altered, how to control the WOZ Recognizer, and how users interact with it. We present the design of the WOZ Recognizer and our process for representing recognition domains using graphs and symbol alphabets. In order to solve this problem, we developed a Wizard of Oz sketch recognition tool, the WOZ Recognizer, that supports controlled recognition accuracy, multiple recognition modes, and multiple sketching domains for performing controlled experiments. Since sketch recognition mistakes are still common, it is important to understand how users perceive and tolerate recognition errors and other user interface elements with these imperfect systems. However, designing and building an accurate and sophisticated sketch recognition system is a time-consuming and daunting task. Sketch recognition has the potential to be an important input method for computers in the coming years, particularly for STEM (science, technology, engineering, and math) education.
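The idea of "controlled recognition accuracy" mentioned in the sketch recognition abstract can be illustrated with a short sketch. This is not the WOZ Recognizer's actual implementation, which the abstract does not describe; it only assumes that a simulated recognizer returns the correct symbol with a chosen probability and otherwise substitutes a different symbol from the domain's alphabet. The names `simulate_recognition`, `run_session`, and the example alphabet are hypothetical.

```python
import random


def simulate_recognition(true_symbol, alphabet, accuracy, rng):
    """Return true_symbol with probability `accuracy`; otherwise return a
    different symbol drawn uniformly from `alphabet` (hypothetical error model)."""
    if rng.random() < accuracy:
        return true_symbol
    wrong = [s for s in alphabet if s != true_symbol]
    # If the alphabet offers no alternative, no error can be injected.
    return rng.choice(wrong) if wrong else true_symbol


def run_session(symbols, alphabet, accuracy, seed=0):
    """Apply the simulated recognizer to a sequence of intended symbols."""
    rng = random.Random(seed)  # seeded so an experiment run is repeatable
    return [simulate_recognition(s, alphabet, accuracy, rng) for s in symbols]


if __name__ == "__main__":
    alphabet = ["+", "-", "x", "y", "="]  # assumed example alphabet
    intended = ["x", "+", "y", "=", "x"] * 200
    observed = run_session(intended, alphabet, accuracy=0.8, seed=42)
    hits = sum(a == b for a, b in zip(intended, observed))
    print(f"observed accuracy: {hits / len(intended):.3f}")
```

Fixing the seed keeps the injected errors identical across participants, which is one plausible way to keep an experimental condition constant; whether the original tool does this is not stated in the abstract.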

The present paper reports on the design and performance of a novel dual-Wizard simulation infrastructure that has been used effectively to prototype next-generation adaptive and implicit multimodal interfaces for collaborative groupwork. This high-fidelity simulation infrastructure builds on past development of single-wizard simulation tools for multiparty multimodal interactions involving speech, pen, and visual input. In the new infrastructure, a dual-wizard simulation environment was developed that supports (1) real-time tracking, analysis, and system adaptivity to a user's speech and pen paralinguistic signal features (e.g., speech amplitude, pen pressure), as well as the semantic content of their input. This simulation also supports (2) transparent user training to adapt their speech and pen signal features in a manner that enhances the reliability of system functioning, i.e., the design of mutually-adaptive interfaces. To accomplish these objectives, this new environment is also capable of handling (3) dynamic streaming digital pen input. We illustrate the performance of the simulation infrastructure during longitudinal empirical research in which a user-adaptive interface was designed for implicit system engagement based exclusively on users' speech amplitude and pen pressure. While using this dual-wizard simulation method, the wizards responded successfully to over 3,000 user inputs with 95-98% accuracy and a joint wizard response time of less than 1.0 second during speech interactions and 1.65 seconds during pen interactions. Furthermore, the interactions they handled involved naturalistic multiparty meeting data in which high school students were engaged in peer tutoring, and all participants believed they were interacting with a fully functional system. This type of simulation capability enables a new level of flexibility and sophistication in multimodal interface design, including the development of implicit multimodal interfaces that place minimal cognitive load on users during mobile, educational, and other applications.